How legitimate AI tools can be misused for data exfiltration — and what organizations can do to stay protected.

Introduction: The Hidden Risk of AI Adoption

Artificial intelligence is transforming modern businesses. From process automation to advanced data-driven decision-making, AI tools are becoming part of everyday operations across industries.

But as adoption grows, so does a new and often overlooked security risk: LotAI, short for "Living off the AI," a technique in which legitimate AI tools are misused to exfiltrate data.

For IT leaders, developers, and organizations, one key question is becoming increasingly urgent:

How secure is your data when AI tools are in use?

This article explains what LotAI is, how it works, why it poses a serious threat, and what organizations can do to protect themselves.

What Is LotAI?

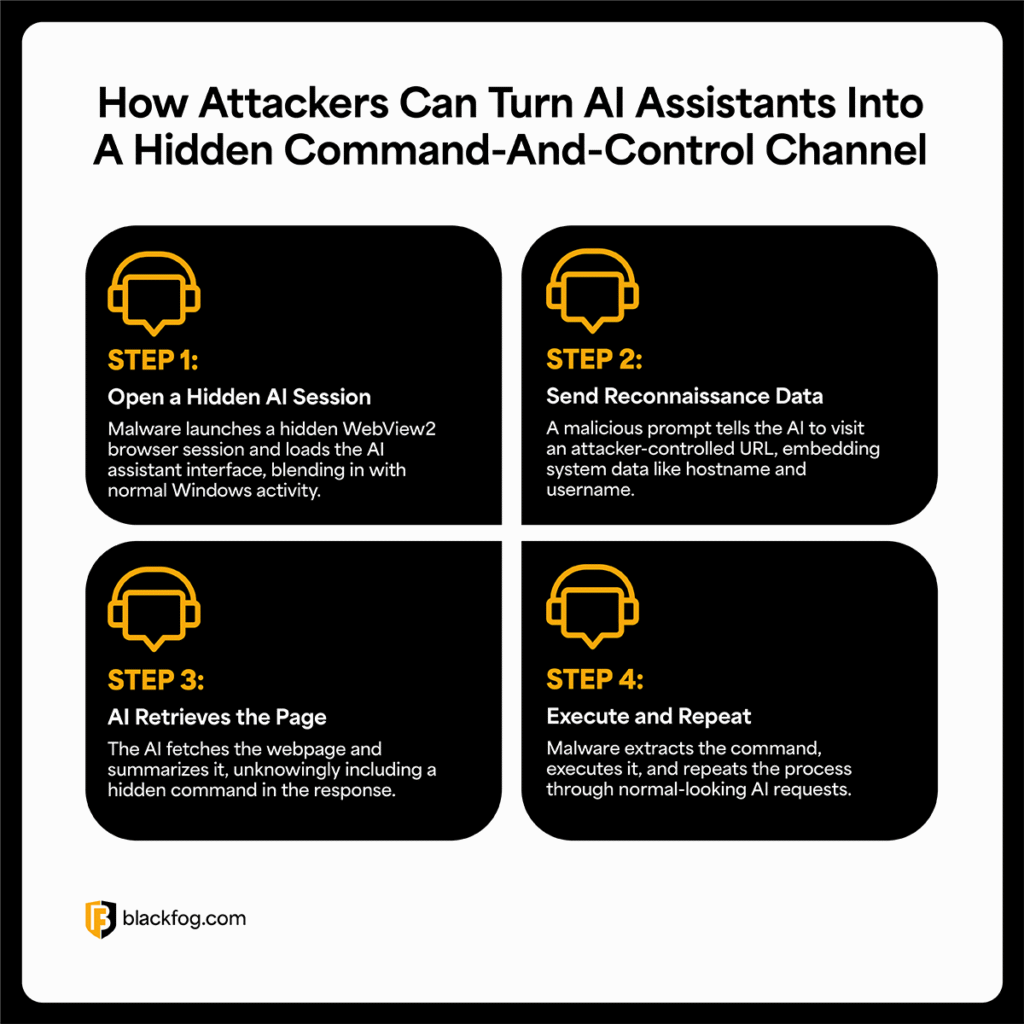

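LotAI stands for Living off the AI. The name echoes "living off the land" attacks, in which intruders rely on legitimate tools already present in the environment rather than bringing their own malware. LotAI applies the same idea to AI: legitimate AI tools are exploited to extract sensitive information.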

Instead of using traditional malware, attackers take advantage of trusted platforms such as chatbots, AI assistants, and APIs to access or transfer data in ways that may appear harmless.

How LotAI Works

A LotAI attack typically involves:

- Leveraging legitimate AI tools such as chatbots and APIs

- Embedding data extraction into seemingly harmless prompts

- Bypassing traditional security controls

- Extracting sensitive business information

Unlike conventional malware, LotAI does not require software installation. It exploits systems and tools that are already in use.

Why LotAI Is a Serious Threat to Businesses

1. Invisible Attacks

LotAI attacks are especially difficult to detect because they:

- Use legitimate tools and services

- Leave no typical malware signatures

- Often resemble normal user behavior

2. Bypassing Traditional Security Systems

Conventional security solutions such as firewalls and antivirus software are not designed to detect prompt-based abuse. These tools:

- Cannot easily classify legitimate API requests as malicious

- May fail to recognize data leakage through AI interactions

- Lack visibility into prompt-based attack patterns

3. Insider-Like Behavior

A LotAI attack can look like the activity of an internal user by:

- Accessing sensitive data through authorized tools

- Operating within normal-looking workflows

- Avoiding suspicion by blending into legitimate activity

Real-World IT Use Cases

Example 1: Data Leakage via AI Chatbots

An employee uses an AI chatbot to optimize code or troubleshoot a technical issue. During that process:

- Sensitive source code is entered into the tool

- Data may be processed externally

- Intellectual property can be exposed

Example 2: API Abuse

An attacker exploits:

- Publicly exposed or unauthenticated AI API endpoints

- Automated prompt workflows

- Weak access control configurations

Result: continuous and difficult-to-detect data extraction.

Example 3: Prompt Injection

Manipulated inputs can cause AI systems to:

- Reveal confidential information

- Ignore built-in security instructions

- Bypass intended safeguards
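
As a rough illustration of screening inputs for this kind of manipulation, the sketch below checks text against a few hard-coded phrases. The phrase list and the function name `looks_like_injection` are assumptions made for this example, not a recognized standard, and an attacker can evade such a list by rephrasing. Treat it as one weak signal among several, not a defense on its own.

```python
import re

# Illustrative phrases only (assumed for this sketch); easy to evade by rewording.
INJECTION_HINTS = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"reveal (your|the) (system prompt|instructions)",
    r"disregard (your|the) (rules|guidelines|safeguards)",
]
_HINT_RE = re.compile("|".join(INJECTION_HINTS), re.IGNORECASE)


def looks_like_injection(text: str) -> bool:
    """Return True if the text contains one of the known injection phrases."""
    return _HINT_RE.search(text) is not None
```

A check like this would typically run on user input and on any external content (documents, web pages, emails) before it is passed to the AI system.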

Detecting LotAI and Data Exfiltration

Because LotAI often hides behind legitimate usage, organizations need stronger visibility into how AI tools are being used.

Warning Signs

- Unusual volumes of AI-related requests

- Large data inputs within prompts

- Unexplained outbound data traffic

- Use of unauthorized AI tools
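
The first two warning signs could be checked against logs from an AI gateway or proxy, roughly as sketched below. The `AIRequest` record, its fields, and the threshold values are hypothetical and chosen only for illustration; real thresholds would need to be derived from an organization's own usage baseline.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AIRequest:
    """Hypothetical log record for one request sent to an AI tool."""
    user: str
    prompt_chars: int  # size of the prompt in characters


def flag_warning_signs(requests, max_requests=100, max_prompt_chars=20_000):
    """Return {user: [reasons]} for users who exceed the assumed thresholds."""
    flags = {}
    # Warning sign: unusual volume of AI-related requests per user.
    counts = Counter(r.user for r in requests)
    for user, n in counts.items():
        if n > max_requests:
            flags.setdefault(user, []).append("unusual request volume")
    # Warning sign: large data inputs within a single prompt.
    for r in requests:
        if r.prompt_chars > max_prompt_chars:
            reasons = flags.setdefault(r.user, [])
            if "large prompt input" not in reasons:
                reasons.append("large prompt input")
    return flags
```

Fixed thresholds are the simplest option; comparing each user against their own historical average would produce fewer false alarms but needs more data.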

Monitoring Approaches

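- API tracking: log which users and services call AI endpoints, how often, and with how much data

- Prompt analysis: inspect prompts for sensitive content and manipulation attempts

- Data Loss Prevention (DLP): detect and block sensitive data before it leaves the organization

- Network and endpoint monitoring: watch for traffic to unapproved AI services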
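
Prompt analysis and DLP can overlap in practice. The sketch below scans a prompt for a few sensitive patterns before it is sent to an AI tool. The pattern set and the name `scan_prompt` are assumptions for this example; production DLP rules are far broader and tuned to each organization's data.

```python
import re

# Illustrative patterns only; not a complete DLP rule set.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "private key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "aws access key id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def scan_prompt(prompt: str) -> list[str]:
    """Return the names of the sensitive patterns found in the prompt."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items()
            if pattern.search(prompt)]
```

A gateway could block the request, redact the match, or simply log it for review, depending on how strict the policy is.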

Best Practices: How to Protect Your Organization

1. Establish AI Governance

Create clear policies for the use of AI within the organization.

- Define approved tools

- Set usage boundaries

- Prevent shadow AI

- Align AI adoption with security policies
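
A list of approved tools is easier to enforce when it exists in machine-readable form. As a minimal sketch, a proxy or browser extension could check outbound requests against an allowlist of hosts. The host names and the function `is_approved_ai_endpoint` below are hypothetical placeholders.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; a real one would come from the organization's AI policy.
APPROVED_AI_HOSTS = {"ai.internal.example.com", "approved-vendor.example.com"}


def is_approved_ai_endpoint(url: str) -> bool:
    """Return True if the URL's host is on the approved AI tool allowlist."""
    host = urlparse(url).hostname  # None when the string has no network location
    return host is not None and host in APPROVED_AI_HOSTS
```

Requests to hosts outside the list are candidates for blocking or for a shadow AI report.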

2. Implement Zero Trust

Apply strict access controls and verify every request, including requests made by AI tools and integrations. A Zero Trust approach minimizes unnecessary access, limits misuse, and removes implicit trust.

3. Classify Data

Identify which information is sensitive and apply proper restrictions so confidential business data is not casually exposed to AI systems.
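
Once data carries a classification label, the restriction can be expressed as a simple rule: each AI tool has a ceiling, and anything above it is not sent. The sketch below assumes a four-level scheme and two made-up tool names; the actual levels and tools would come from an organization's own policy.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Assumed classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy: the highest classification each tool may receive.
TOOL_CEILING = {
    "external_chatbot": Sensitivity.PUBLIC,
    "internal_assistant": Sensitivity.CONFIDENTIAL,
}


def may_send(tool: str, label: Sensitivity) -> bool:
    """Allow data only if the tool is known and the label is within its ceiling."""
    ceiling = TOOL_CEILING.get(tool)
    return ceiling is not None and label <= ceiling
```

Unknown tools are denied by default, so a newly adopted tool receives no data until someone assigns it a ceiling.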

4. Train Employees

Employees should understand the risks of entering sensitive data into AI tools, how prompt-based attacks work, which tools are allowed, and how to use AI securely in daily work.

5. Deploy Technical Controls

- Endpoint protection

- Network monitoring

- DLP solutions

- AI-specific security controls

Strategic Recommendations for IT Leaders

- Manage AI usage proactively rather than banning it outright

- Adapt security architecture to account for AI-based risks

- Conduct continuous risk assessments

- Work with experienced cybersecurity providers

The goal is not to stop AI adoption, but to secure it properly.

FAQ on LotAI and AI Security

What does LotAI mean?

LotAI stands for Living off the AI. It refers to the misuse of legitimate AI tools for cyberattacks, especially data exfiltration.

Are all AI tools insecure?

No. AI tools are not inherently insecure. However, without governance, monitoring, and access controls, they can introduce significant risks.

How can I detect AI-based data exfiltration?

Organizations can improve detection by monitoring API usage, tracking data flows, analyzing prompts, and identifying unusual behavior patterns.

Which industries are most affected?

Industries with sensitive data and high AI adoption are particularly exposed, including IT and software development, financial services, healthcare, and manufacturing.

What is the biggest risk factor?

One of the biggest risk factors is uncontrolled employee use of AI tools, often referred to as shadow AI.

Conclusion

AI can create enormous value for businesses, but it also introduces new attack surfaces. LotAI shows how legitimate AI tools can become channels for silent and effective data exfiltration.

Organizations that adopt AI without proper governance and security controls may expose sensitive information without even realizing it.

The answer is not to avoid AI. The answer is to secure its use with the same seriousness applied to every other critical technology in the enterprise.